By Belle Moyers

When talking about scientific issues, the phrase “Correlation doesn’t imply causation” is sometimes thrown around. But what does it mean? Science makes statements about cause and effect. Smoking causes lung cancer. Carbon emissions cause climate change. Higher temperatures cause increased violence. Clearly, scientists have some way of inferring causal relationships. But how do they grapple with the idea that “Correlation doesn’t imply causation”? If they don’t use correlation, what tools do they use to infer causation?

As an example, when ice cream sales increase, so does the number of shark attacks. In other words, ice cream sales correlate with shark attacks. But does this mean that ice cream sales cause shark attacks? Of course not. The “Ice cream causes shark attacks” argument is a classic example of a logical fallacy. People will sometimes satirize this argument because it’s painfully obvious that there is not a cause and effect relationship.

Plausible mechanisms

Almost any time the “Ice cream causes shark attacks” example is brought up, the immediate response is something like “No, no—hot days make people visit the beach. At the beach, you’re more likely to both buy ice cream and to get attacked by a shark. See, the correlation between these two variables is actually explained by the hot, sunny days.” But there’s a problem. This response says “Shark attacks are correlated with hot days. Ice cream consumption is correlated with hot days. Hot days cause both of these things.” Someone should be shouting, “Hey! Correlation doesn’t imply causation!”

Why is it that we think it’s okay to infer causation in one case, but not in the other? One factor is that there’s a plausible mechanism provided. By plausible mechanism I mean a rational explanation that ties together two or more observations. Based on what we know about how people behave and how sharks behave, we can tell a believable story about how ice cream sales, shark attacks, and heat all correlate. Connecting these dots in a rational way is part of how scientists get from correlation to causation.

But that’s not enough. After all, we can come up with a plausible mechanism for why ice cream consumption would cause shark attacks. We could say “Sharks have a particularly good sense of smell. Perhaps ice cream smells good to them. When a person eats ice cream and gets into the water, sharks smell the ice cream and dart directly towards that person.” What’s more, both explanations could be true (ice cream and shark attacks, or hot days and shark attacks). Clearly, more is needed to imply causation. In order to infer causation, we have to confirm the connection between each step in our story. If we used the explanation at the beginning of this paragraph, we would need to confirm that sharks can smell ice cream and that when they smell ice cream they swim towards it. We connect the dots in our explanations by experiments.

Experimental Design

When we have to pick between multiple plausible mechanisms—or we want to try to confirm a single mechanism—we perform one or more experiments. The field of Experimental Design is an area of statistics which helps researchers plan and interpret experiments. How we design an experiment depends on many things, including how many mechanisms we want to test and how many subjects we have. (“Subjects” could be people, days, mice, or any other thing that you’re observing.) If we have too few subjects and too many explanations to test, some experiments are impossible.

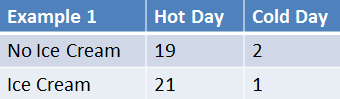

In our example, there are two factors we’re interested in testing: ice cream consumption and hot days. For this experimental design, we need four groups of subjects. Group one would not eat ice cream on a hot day at the beach. Group two would eat ice cream on a hot day at the beach. Group three would not eat ice cream on a cold day at the beach. Group four would eat ice cream on a cold day at the beach. Then we’d count up the number of shark attacks that happened in each of the four groups. There are many possible outcomes to this experiment, but let’s go through three examples as an illustration. In each of the following tables, the numbers show how many shark attacks happened in each group.

In example 1, you can see that on hot days, there appear to be a lot more shark attacks than on cold days. This happens whether or not ice cream is being eaten. So, if this were the result of our experiment, we would conclude that “Hot days are correlated with shark attacks, but cold days aren’t.” Of course, we couldn’t just say that based on the table alone. These results would be interpreted using statistics. The statistics would take into account all kinds of things like the number of subjects (people) in each group and how many shark attacks each group had. Researchers use statistics, like the correlation coefficient and the p-value, to be more confident that their observations aren’t due to random chance*.

In example 2, you can see that when ice cream is eaten there are many more shark attacks than when ice cream is not eaten. This happens whether the day is hot or cold. If this were the result of our experiment, we would conclude that “Ice cream consumption correlates with shark attacks.” Assuming, of course, the statistics support that conclusion.

In example 3, shark attacks only happen when it’s both hot and when people consume ice cream. You can see that shark attacks are low unless the day is both hot and ice cream is being eaten. This is referred to as a first-order interaction, which means that two factors (hot days and ice cream) have a different effect when they’re together than when they’re separate. We might say something like “Shark attacks are higher on hot days when ice cream is being consumed.” But finding a plausible mechanism to explain the finding might take a bit more effort. Maybe it would be “Ice cream melts faster than it can be eaten on hot days. Melted ice cream falls into the ocean and attracts sharks, which then attack people.”

From these examples, it seems like we might have a way to infer causality. If the numbers support our story, then we can say there’s a causal relationship. But there are two major problems with inferring causality, even from very good experiments. 1) In order to rule out all possible explanations and interactions, the required number of experimental subjects can become astronomical. It’s often not practical or possible to rule everything out. 2) Even if you had enough data, all interpretations are based on statistics, like a correlation. So there’s always a chance, however slim, that your results are simply by chance.

The “bad” news

The message is we can never infer causation! Or, at least, there will always be problems with inferring causation. Our statistical tests only tell us about what is or isn’t likely. It’s always possible there is some other explanation to test or that an experimental result is by chance. So, is the search for knowledge hopeless? Does any experiment matter? Is science useless?

As with all philosophical problems, you have to find an answer yourself. My take is that science isn’t useless. Even though it’s technically possible that ice cream causes shark attacks, there is a lack of evidence to support that possibility and a lot of evidence to support the idea that hot days cause shark attacks. Unless someone finds a land-shark ruthlessly tracking down ice-cream eaters (goodbye, Ben & Jerry’s!), it would be a pretty silly to think that eating ice cream increases your chances of death by shark.

To take a real-world example, we can’t assume that all of our knowledge of rocket science acts in a causal way, i.e. that fuel burns through a carefully-shaped nozzle causing an upward thrust that pushes the rocket through the atmosphere and into orbit. Still, as my good aeronautical engineering friend says, “Yeah, but we still make rocket ships.” In science and engineering, as in the rest of life, practical assumptions supported by evidence win out over philosophical problems—no matter how real and important those problems are.

Practical assumptions about causality have been a catalyst for science, engineering, and medicine for the past few thousand years. If someone suggested that DNA wasn’t a major cause of inherited traits because we can’t truly prove causality, they’d get some pretty strange looks! The same goes for smoking causing lung cancer, massive blood loss causing death, circular electron flow causing magnetic fields, and a number of other scientifically-supported causal relationships. So, even though causation always comes with a bit of uncertainty, science does a pretty good job of discovering causal relationships that make sense and work in the day-to-day life of scientists and others.

But how can a person know when it’s okay to assume causality in some big scientific problem? That’s not an easy thing to do. When a person understands enough about how a field works or has enough practical experience with an issue, they become better at knowing when it’s okay to infer causality. This is why people spend years of their life studying biology, physics, sociology, or engineering. Shortcuts aren’t easy to find.

One way you can check for causation is using the Bradford Hill Criteria, a list of minimum requirements for a causal relationship to be true. You have to keep in mind, though, that even if a relationship meets these criteria it doesn’t guarantee causality. It’s only that if a relationship doesn’t meet the criteria, it’s almost certainly not causal. Beyond that, the best recommendation I can give is to trust the expert consensus on a topic, or improve your knowledge of a field through study—particularly of statistics*, since it’s a major part of how scientists infer causality in almost every field. It’s a messy and possibly unsatisfying answer, but it’s how science works.

*Author’s note: Statistics is a big topic, and we couldn’t adequately dive into the details in this post. Until a post can be dedicated to the statistics of experiments, I encourage you to check out this series on statistics published by Nature.

About the author

Our second co-founder, Logistics Coordinator, and Senior Editor, Belle Moyers, is a doctoral student in the Bioinformatics program at the University of Michigan. Belle’s research focuses on methodological problems in molecular evolution, and correctly inferring information from data. In other words, her research sheds light on problems with the methods commonly used in the field of Evolutionary Biology so that improvements can be made. Belle holds degrees in Biology and Psychology from Purdue University. Her interests are in science and education issues, philosophy of science, and the intersection of science and business. Outside of science, Belle enjoys reading, running, hiking, and brewing/consuming beer.

Read more from Belle here.

2 thoughts on “Science behind-the-scenes: Correlation and causation”